AI is no longer confined to isolated use cases. It is embedded in how enterprises operate, decide, and compete.

From recommendation engines to risk scoring models, AI-enabled systems are quietly becoming the backbone of modern business. But as organizations move from controlled deployments to enterprise-wide adoption, a new reality sets in.

Scaling AI is not just about expanding capability. It is about maintaining control as complexity multiplies. And that is where most organizations struggle.

When Growth Outpaces Structure

Early-stage AI initiatives are usually well contained. They operate on defined datasets, in controlled environments, with a limited set of stakeholders. Performance is measurable, risks are manageable, and outcomes are predictable. But scale changes the equation. As AI expands:

- More data sources are introduced

- More models are deployed across functions

- More teams begin to rely on AI-driven decisions

What was once structured becomes distributed. Without a corresponding evolution in oversight, systems begin to drift. Not dramatically, but gradually. And in AI systems, gradual drift is enough to create long-term risk.

Unlike traditional systems, AI does not simply execute logic. It learns, adapts, and evolves. This introduces layers of complexity that are not always visible at the surface:

- Models trained on historical data may lose relevance over time

- Data pipelines may change without clear traceability

- Outputs may vary under slightly different conditions

- Dependencies across systems may not be fully mapped

At scale, these variables interact in unpredictable ways. The challenge is not that AI becomes unreliable. The challenge is that it becomes harder to understand and govern.
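One way to make the first of these points concrete is a simple drift check that compares the data a model was trained on with the data it sees today. The sketch below uses the Population Stability Index (PSI), a common drift statistic; it is an illustration rather than a prescribed method, and the function name, bin count, and smoothing floor are all assumptions chosen for this example.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,  # assumption: ten equal-width buckets
) -> float:
    """PSI between a baseline sample and a recent sample of one numeric feature."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("baseline sample has no spread to bucket")
    width = (hi - lo) / bins

    def bucket_shares(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge buckets.
            idx = max(0, min(bins - 1, int((v - lo) / width)))
            counts[idx] += 1
        # A small floor (assumed here) avoids log(0) for empty buckets.
        return [max(c / len(values), 1e-6) for c in counts]

    e = bucket_shares(expected)
    a = bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical data: a baseline feature and the same feature after a shift.
baseline = [i / 100 for i in range(100)]
recent = [v + 0.5 for v in baseline]
drift_score = population_stability_index(baseline, recent)
```

A PSI near zero means the two samples look alike. A value above roughly 0.2 is often treated as a rule-of-thumb signal that the input has shifted enough to warrant review, though the right threshold depends on the model and the business context.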

Where Control Typically Breaks Down

Loss of control is rarely caused by a single failure point. It emerges from gaps that build over time. Common patterns include:

- Fragmented model ecosystems with no unified lifecycle management

- Limited visibility into how decisions are being generated

- Delayed response cycles when performance issues arise

- Disconnected ownership between data, engineering, and business teams

These are not technical shortcomings. They are structural ones. And they directly impact the enterprise’s ability to scale AI with confidence.

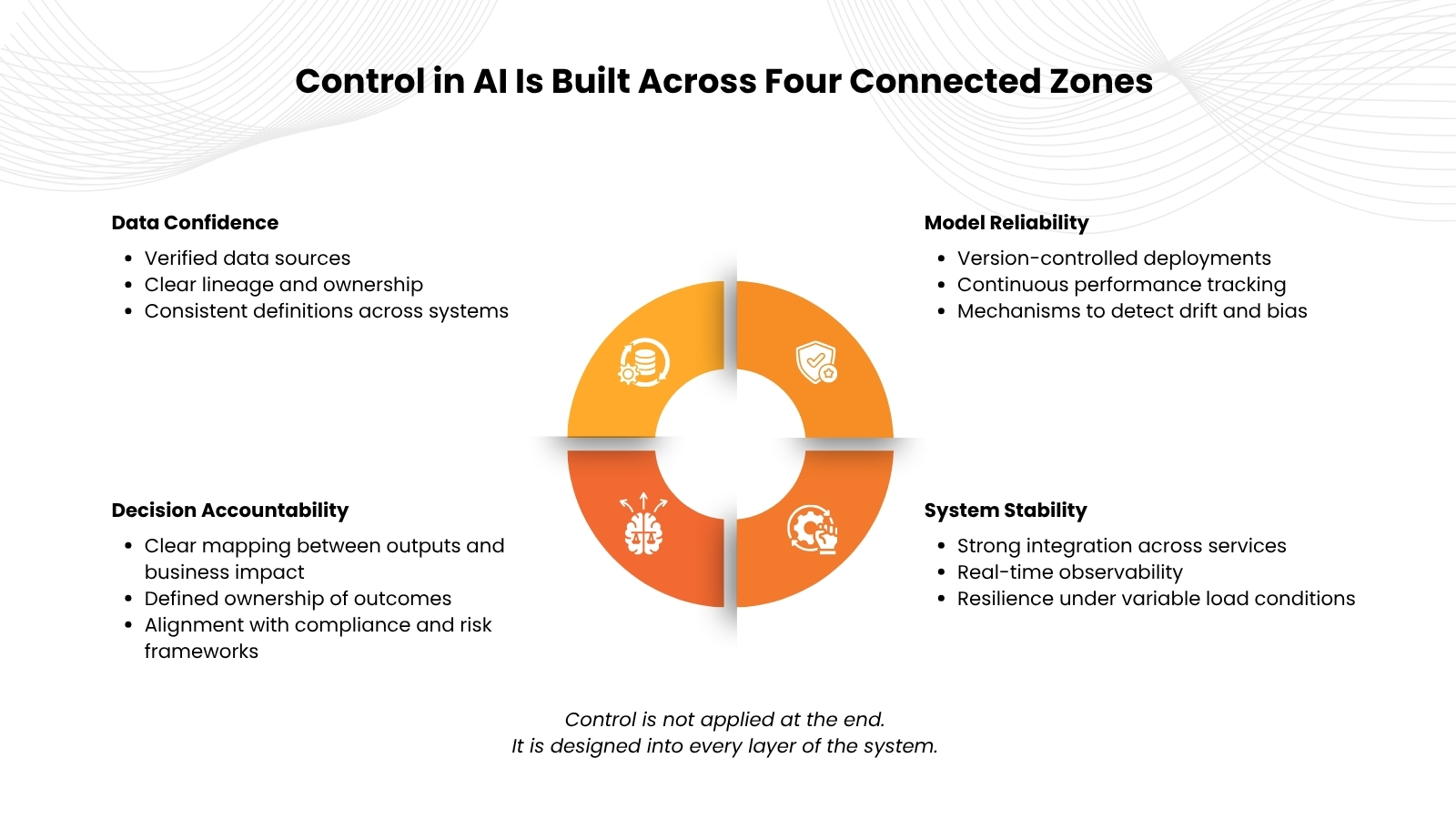

A More Practical Way to Think About Control

Control in AI systems is often misunderstood as restriction. In reality, it is about clarity and consistency. A controlled AI environment ensures that:

- Every model can be traced back to its source and purpose

- Every decision can be explained when needed

- Every system behaves predictably under stress

It is less about limiting what AI can do and more about ensuring that what it does is reliable, repeatable, and aligned.
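As an illustration of traceability, here is a minimal sketch of a model registry that refuses to accept a model unless its owner, purpose, and training data are recorded. The class names, fields, and sample values are hypothetical and exist only for this example; a real registry would sit on a database or a dedicated platform.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ModelRecord:
    """Hypothetical lineage record for one deployed model version."""
    name: str
    version: str
    owner: str          # team accountable for the model
    purpose: str        # business decision the model supports
    training_data: str  # identifier of the dataset snapshot used
    trained_on: date


class ModelRegistry:
    """In-memory registry; illustrative only."""

    def __init__(self) -> None:
        self._records = {}

    def register(self, record: ModelRecord) -> None:
        key = (record.name, record.version)
        if key in self._records:
            raise ValueError(f"{record.name} {record.version} is already registered")
        # Reject records with missing lineage so every model stays traceable.
        for field_name in ("owner", "purpose", "training_data"):
            if not getattr(record, field_name).strip():
                raise ValueError(f"missing required field: {field_name}")
        self._records[key] = record

    def trace(self, name: str, version: str) -> ModelRecord:
        """Return the lineage record; raises KeyError if the model is unknown."""
        return self._records[(name, version)]


registry = ModelRegistry()
registry.register(
    ModelRecord(
        name="risk_scoring",
        version="1.0",
        owner="risk-analytics",
        purpose="score incoming loan applications",
        training_data="loans_snapshot_2023",
        trained_on=date(2024, 1, 15),
    )
)
record = registry.trace("risk_scoring", "1.0")
```

The design choice worth noting is that the check happens at registration time. A model with no named owner or stated purpose never enters the ecosystem, which is cheaper than reconstructing lineage after the fact.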

Design Principles for Scaling with Control

Enterprises that successfully scale AI-enabled systems tend to follow a few consistent principles.

Design for Evolution, Not Just Deployment

AI sits at the intersection of multiple functions. Without alignment between data teams, engineering, and business stakeholders, control breaks down quickly. Clear ownership and shared accountability are essential.

From Controlled Systems to Confident Decisions

When control is built into AI systems, the benefits extend beyond operations. Control directly shapes how organizations make decisions:

- Leaders gain confidence in AI-driven insights

- Teams rely on consistent outputs across use cases

- Risks are identified and addressed proactively

- AI becomes a trusted layer of the business, not a black box

This is when AI transitions from a technical capability to a strategic advantage.

AI maturity is often measured by how many models an organization has deployed or how advanced its algorithms are. But that is only part of the picture. True maturity is reflected in the ability to:

- Scale systems without losing visibility

- Maintain consistency across environments

- Align AI outcomes with business objectives

In other words, maturity is not about how much AI you use. It is about how well you can control it.

Conclusion

Scaling AI-enabled systems is inevitable. Losing control is not. The difference lies in how intentionally systems are designed, how clearly responsibilities are defined, and how deeply governance is embedded into the ecosystem. Because at scale, AI is not just about intelligence. It is about discipline.

Scale AI without compromising control, clarity, or confidence.